Last year’s lab guest and long-time collaborator Carolyn McGettigan has put out another one:

Speech comprehension aided by multiple modalities: Behavioural and neural interactions

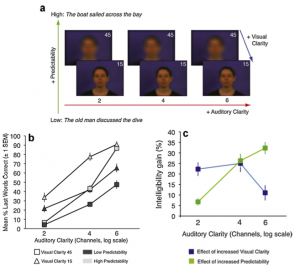

I had the pleasure of being involved at the start, when Carolyn conceived much of this, and again when things came together in the end. Carolyn nicely demonstrates how varying auditory and visual clarity interact with the semantic benefits a listener can get from the famous Kalikow SPIN (speech in noise) sentences. The data highlight posterior STS and the fusiform gyrus as sites for convergence of auditory, visual and linguistic information.

References

- McGettigan C, Faulkner A, Altarelli I, Obleser J, Baverstock H, Scott SK. Speech comprehension aided by multiple modalities: behavioural and neural interactions. Neuropsychologia. 2012 Apr;50(5):762–76. PMID: 22266262.