I am very proud to announce the first paper that was planned, conducted, analysed, and written up entirely within our group. Julia joined me as the first PhD student in December 2010 and has been busy doing awesome work ever since. Check out her first paper!

Auditory skills and brain morphology predict individual differences in adaptation to degraded speech

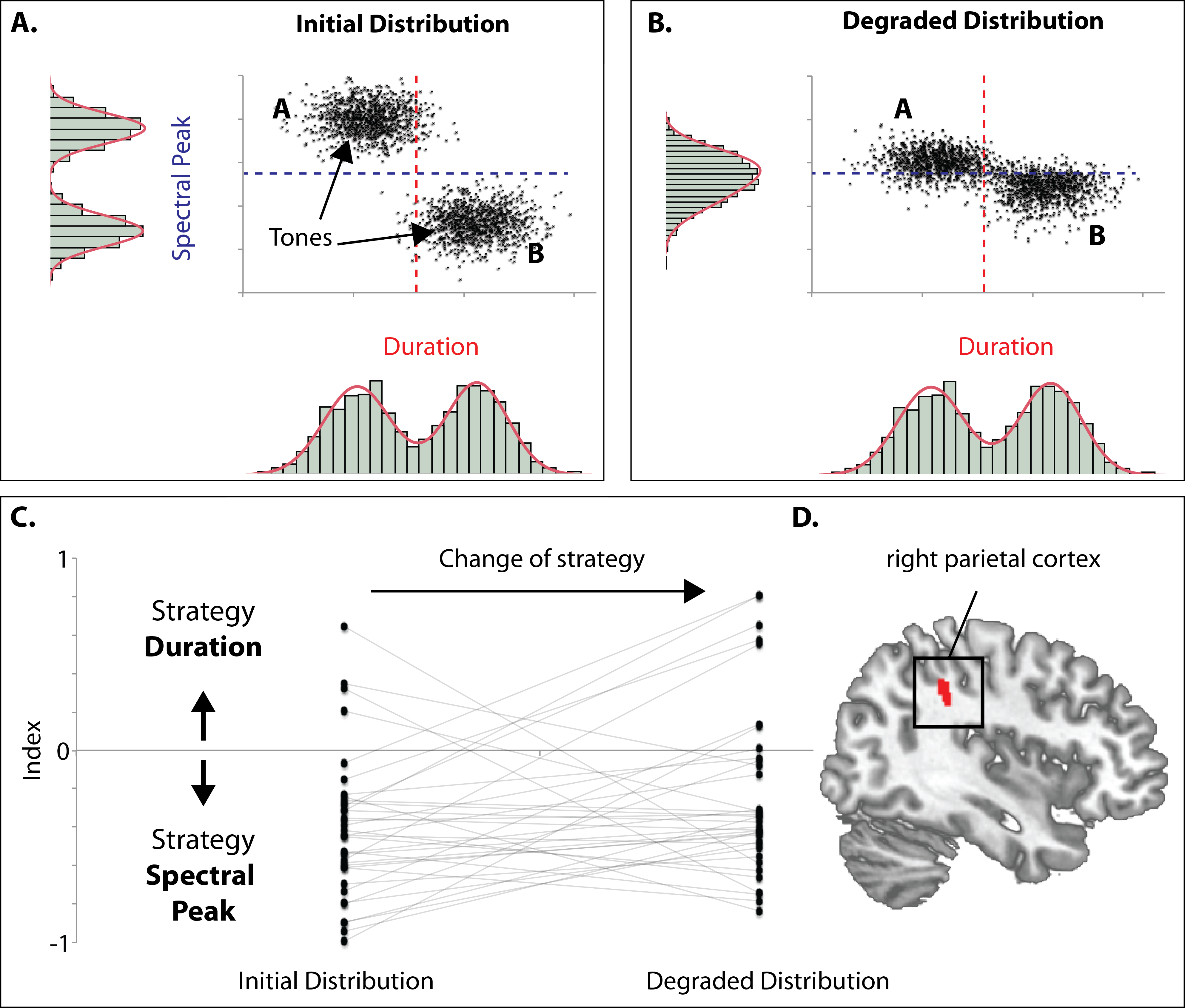

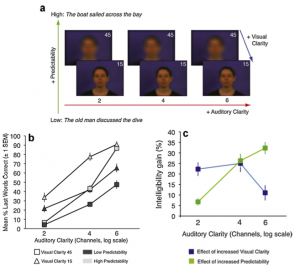

Noise-vocoded speech is a spectrally highly degraded signal, but it preserves the temporal envelope of speech. Listeners vary considerably in their ability to adapt to this degraded speech signal. Here, we hypothesized that individual differences in adaptation to vocoded speech should be predictable by non-speech auditory, cognitive, and neuroanatomical factors. We tested eighteen normal-hearing participants in a short-term vocoded speech-learning paradigm (listening to 100 4-band-vocoded sentences). Non-speech auditory skills were assessed using amplitude modulation (AM) rate discrimination, where modulation rates were centered on the speech-relevant rate of 4 Hz. Working memory capacities were evaluated, and structural MRI scans were examined for anatomical predictors of vocoded speech learning using voxel-based morphometry. Listeners who learned faster to understand degraded speech showed smaller thresholds in the AM discrimination task. Anatomical brain scans revealed that faster learners had increased volume in the left thalamus (pulvinar). These results suggest that adaptation to vocoded speech benefits from individual AM discrimination skills. This ability to adjust to degraded speech is furthermore reflected anatomically in an increased volume in an area of the thalamus, which is strongly connected to the auditory and prefrontal cortex. Thus, individual auditory skills that are not speech-specific and left thalamus gray matter volume can predict how quickly a listener adapts to degraded speech.

Please get in touch with Julia Erb if you are interested in a preprint; we will share it as soon as we get hold of the final, typeset manuscript.

[Update#1] Julia has also published a blog post on her work.

[Update#2] Paper is available here.

References

- Erb J, Henry MJ, Eisner F, Obleser J. Auditory skills and brain morphology predict individual differences in adaptation to degraded speech. Neuropsychologia. 2012 Jul;50(9):2154–64. PMID: 22609577.
